A recent report claimed that an anonymous company accidentally spent $500 million on Anthropic’s Claude in a single month after failing to put usage limits on employee access.

That number is absurd. For most small businesses, it sounds so far removed from reality that it is easy to laugh it off and move on, but that would be the wrong lesson.

The point is not that your business is going to wake up tomorrow with a half-billion-dollar AI bill. The point is that AI has introduced a new kind of business risk: fast-moving, employee-driven, poorly governed software usage that can create cost, security, compliance, and operational problems before leadership even knows what is happening.

Small businesses do not need a Fortune 500 AI budget to make Fortune 500 AI mistakes. They just make them at a smaller scale, and sometimes a smaller mistake hurts more because there is less financial room to absorb it.

AI adoption is moving faster than AI strategy. Your employees are already using AI. They are using ChatGPT, Claude, Copilot, Gemini, browser extensions, AI note takers, AI writing tools, coding assistants, image generators, meeting bots, inbox assistants, and whatever else promises to save them time.

Some of this is good. AI can absolutely improve productivity. It can help write first drafts, summarize documents, review contracts, organize meeting notes, analyze spreadsheets, draft client communications, troubleshoot technical problems, and speed up repetitive work.

The problem is not AI usage. The problem is unmanaged AI usage.

Many businesses are still treating AI as a novelty or a personal productivity tool, while employees are already treating it like infrastructure. That gap is where the risk lives.

If employees are using AI tools without clear rules, approved platforms, data handling guidance, spending controls, and accountability, the business has not adopted AI strategically. It has simply allowed AI to spread.

That is not a strategy. That is drift. The reported Claude incident is a perfect example of what happens when access is confused with strategy.

Giving employees access to powerful AI tools can be valuable, but access alone does not answer the most important questions.

Who is allowed to use the tool?

What business problems should it be used for?

What data is allowed to go into it?

What data is prohibited?

Who owns the output?

How is usage monitored?

How are costs capped?

How do we measure whether this is actually helping?

Without answers to those questions, at best AI becomes another unmanaged business expense. At worst, it becomes an unmanaged business process.

That matters because modern AI tools are not like traditional software subscriptions. A normal SaaS tool usually has a predictable monthly cost per user. AI can be different. Depending on the platform, plan, API model, agentic workflow, integrations, automation, and volume of usage, costs can scale quickly. The more powerful the workflow, the more important governance becomes.
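To make the difference concrete, here is a minimal sketch comparing a flat per-seat subscription with usage-based billing. Every price and token volume below is a made-up assumption for illustration, not any vendor's actual rate, and the function names are hypothetical.

```python
# Hypothetical prices for illustration only; check your vendor's current rates.
SEAT_PRICE_PER_MONTH = 30.00       # flat per-user subscription (assumed)
PRICE_PER_MILLION_INPUT = 3.00     # usage-based input-token price (assumed)
PRICE_PER_MILLION_OUTPUT = 15.00   # usage-based output-token price (assumed)


def flat_monthly_cost(users: int) -> float:
    """Traditional SaaS: cost depends only on headcount."""
    return users * SEAT_PRICE_PER_MONTH


def usage_monthly_cost(input_tokens: int, output_tokens: int) -> float:
    """Usage-based billing: cost depends on volume, not headcount."""
    return (input_tokens / 1_000_000 * PRICE_PER_MILLION_INPUT
            + output_tokens / 1_000_000 * PRICE_PER_MILLION_OUTPUT)


if __name__ == "__main__":
    users = 10
    print(f"Flat plan, {users} users: ${flat_monthly_cost(users):,.2f}")
    # The same ten users at three assumed usage levels (tokens per month).
    for label, tokens_in, tokens_out in [
        ("light chat", 5_000_000, 1_000_000),
        ("heavy chat", 50_000_000, 10_000_000),
        ("automated agents", 2_000_000_000, 400_000_000),
    ]:
        cost = usage_monthly_cost(tokens_in, tokens_out)
        print(f"Usage-based, {label}: ${cost:,.2f}")
```

The flat plan costs the same no matter how hard the tools are used. The usage-based bill tracks volume, so the same ten people can generate very different invoices depending on how much automation runs on their behalf.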

This is especially true with AI agents and coding assistants. These tools do not just answer one question and stop. They can perform multi-step tasks, generate large amounts of output, run repeated analysis, review codebases, process documents, or interact with other systems. That can be useful, but it also means the cost and risk can grow quietly in the background.
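One way to keep that growth from staying quiet is a simple month-to-date budget check. The sketch below assumes you can export daily spend figures from your AI vendor's billing page or invoice. The `check_budget` function, the 80% warning threshold, and the sample numbers are all hypothetical, not part of any real platform's API.

```python
from dataclasses import dataclass


@dataclass
class BudgetStatus:
    spent: float   # month-to-date spend in dollars
    cap: float     # monthly cap in dollars
    level: str     # "ok", "warning", or "over_cap"


def check_budget(daily_spend: list[float], monthly_cap: float,
                 warn_fraction: float = 0.8) -> BudgetStatus:
    """Sum month-to-date spend and classify it against the monthly cap."""
    spent = sum(daily_spend)
    if spent >= monthly_cap:
        level = "over_cap"
    elif spent >= warn_fraction * monthly_cap:
        level = "warning"
    else:
        level = "ok"
    return BudgetStatus(spent=spent, cap=monthly_cap, level=level)


if __name__ == "__main__":
    # Assumed daily totals: two quiet days, then an automated job ramps up.
    status = check_budget([12.0, 15.5, 140.0, 260.0], monthly_cap=500.0)
    print(f"${status.spent:,.2f} of ${status.cap:,.2f}: {status.level}")
```

A check like this only reports. Where the platform offers hard spending limits in its admin console, those are the stronger control, and a report like this is the early warning that someone should look.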

For a small business, the danger is not a $500 million invoice. The danger is paying for tools no one is managing, letting sensitive data leak into platforms that were never approved, relying on AI-generated work no one reviews, or building business processes around accounts the company does not control.

Some businesses will hear stories like this and decide the safest move is to block AI entirely. That is understandable, but it is usually not realistic. If AI tools help employees do their jobs faster, people will find ways to use them. If the business does not provide an approved path, employees may create their own path. That is how shadow IT happens. The better approach is not panic. It is governance.

AI governance does not need to be complicated. For most small businesses, it should start with practical controls that match the size of the company. A good small business AI strategy should include:

- Approved AI tools and platforms

- Clear rules for what data can and cannot be entered

- Spending limits and usage monitoring

- Role-based access for employees

- Human review for important AI-generated work

- Policies for client data, financial data, health data, legal documents, credentials, and confidential information

- A process for evaluating new AI tools before employees start using them

- A way to measure whether AI is saving time, improving quality, or reducing cost

That last point is critical. AI should not be adopted because it is exciting. It should be adopted because it solves a real business problem.

If an AI tool saves five hours per week, improves response times, helps generate better proposals, reduces administrative work, or improves customer service, that is useful. If it creates more subscriptions, more confusion, more risk, and more low-quality output, it is not innovation. It is clutter.
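The five-hours-per-week example can be turned into rough arithmetic. This is a back-of-the-envelope sketch: the hourly cost and tool price are assumed figures, the function name is hypothetical, and the result only holds if the saved time actually goes toward other useful work.

```python
WEEKS_PER_MONTH = 52 / 12  # average number of weeks in a month


def monthly_net_value(hours_saved_per_week: float,
                      hourly_cost: float,
                      tool_cost_per_month: float) -> float:
    """Value of time saved in a month minus what the tool costs that month."""
    time_value = hours_saved_per_week * WEEKS_PER_MONTH * hourly_cost
    return time_value - tool_cost_per_month


if __name__ == "__main__":
    # Assumed inputs: 5 hours saved per week, $40 per hour fully loaded
    # labor cost, and a $30 per month subscription.
    net = monthly_net_value(5, hourly_cost=40.0, tool_cost_per_month=30.0)
    print(f"Estimated net value per month: ${net:,.2f}")
```

A tool that saves no measurable time shows up as a negative number here, which is the clutter case in numeric form.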

Cost control is only one part of the strategy. The Claude story is dramatic because the dollar amount is dramatic, but for small businesses, cost is only one part of the AI risk picture. The bigger issue may be data control. Employees may paste client emails, contracts, tax documents, HR issues, financial records, passwords, source code, internal strategy, vendor disputes, or customer lists into AI tools without realizing the consequences.

That does not mean every AI platform is unsafe. Some enterprise AI platforms provide stronger privacy, security, and data handling protections than consumer-grade tools. But the business needs to know which tools are being used and under what terms. This is where small businesses need to be honest with themselves. If employees are using free personal AI accounts to process company information, the company probably does not have enough visibility or control.

That creates real questions.

- Where is the data going?

- Is it being used for model training?

- Can the company audit usage?

- Can access be revoked when an employee leaves?

- Is multifactor authentication enforced?

- Are files being uploaded?

- Are browser extensions reading sensitive pages?

- Are AI meeting bots recording confidential conversations?

These are not theoretical concerns. They are the same kinds of basic governance questions businesses already ask about email, file sharing, password managers, CRMs, and accounting systems. AI should be treated with the same seriousness. A small business does not need to start with a grand AI transformation plan. It should start with a simple question: Where can AI safely and measurably improve the business?

That might mean using AI to draft marketing content, summarize long documents, build internal SOPs, assist with help desk responses, analyze sales data, improve customer communication, or speed up research. Start with real use cases. Then match the tool to the use case. Then apply controls.

A practical AI rollout might look like this:

- Identify the top three repetitive tasks employees spend too much time on.

- Choose one approved AI platform for business use.

- Define what data is allowed and prohibited.

- Set user access, billing limits, and administrative ownership.

- Train employees on safe and effective usage.

- Review results after 30 to 60 days.

That is not flashy, but it works. The goal is not to use AI everywhere. The goal is to use AI where it produces value without creating unnecessary risk. AI should be managed like any of your other business systems. The biggest mistake small businesses can make is treating AI as something outside normal IT and business management. It is not.

AI touches identity, security, compliance, finance, operations, HR, sales, marketing, customer service, and intellectual property. That means it needs ownership. Someone needs to be responsible for deciding which tools are approved, how accounts are managed, how data is protected, how employees are trained, how spending is reviewed, and how the business measures results. For many small businesses, that responsibility should involve leadership, IT, and whoever owns the affected business process.

For example, marketing should help define AI use in content creation. Finance should care about billing and invoice-related AI usage. HR should care about employee data. IT should care about access, security, logging, and data protection. Leadership should care about the overall business value. AI is too powerful to be left entirely to individual preference.

The reported $500 million Claude bill is not just a story about one company’s lack of spending controls. It is a warning about what happens when AI adoption outruns AI management. Small businesses should not avoid AI; that would be shortsighted. But they should also not let AI creep into the business through personal accounts, unmanaged tools, unclear policies, and uncapped spending. The right approach is controlled adoption.

Use AI. Encourage experimentation. Look for productivity gains. But put guardrails in place. Decide which tools are approved. Protect sensitive data. Set spending limits. Train employees. Review usage. Measure outcomes. Keep humans responsible for important decisions. AI can be a real advantage for small businesses, especially the ones willing to use it thoughtfully. But like every powerful tool, it needs rules.

The companies that get this right will not be the ones that blindly chase every new AI feature. They will be the ones that build AI into their business with discipline, security, and a clear purpose. That is the lesson small businesses should take from the Claude story. AI without strategy is just another unmanaged expense. AI with strategy can become an advantage. At Valley Techlogic, we can be your strategic partner as you roll out AI in your business and help prevent costly mistakes like the one in this article. Learn more today with a consultation.

Looking for more to read?

- AI is making fraud easier and more lucrative, with AI-enabled phishing emails seeing 25% higher open rates than human-crafted variations

- Agentic search? Google’s annual conference I/O revealed new features coming to search, and how your personal data may integrate into it

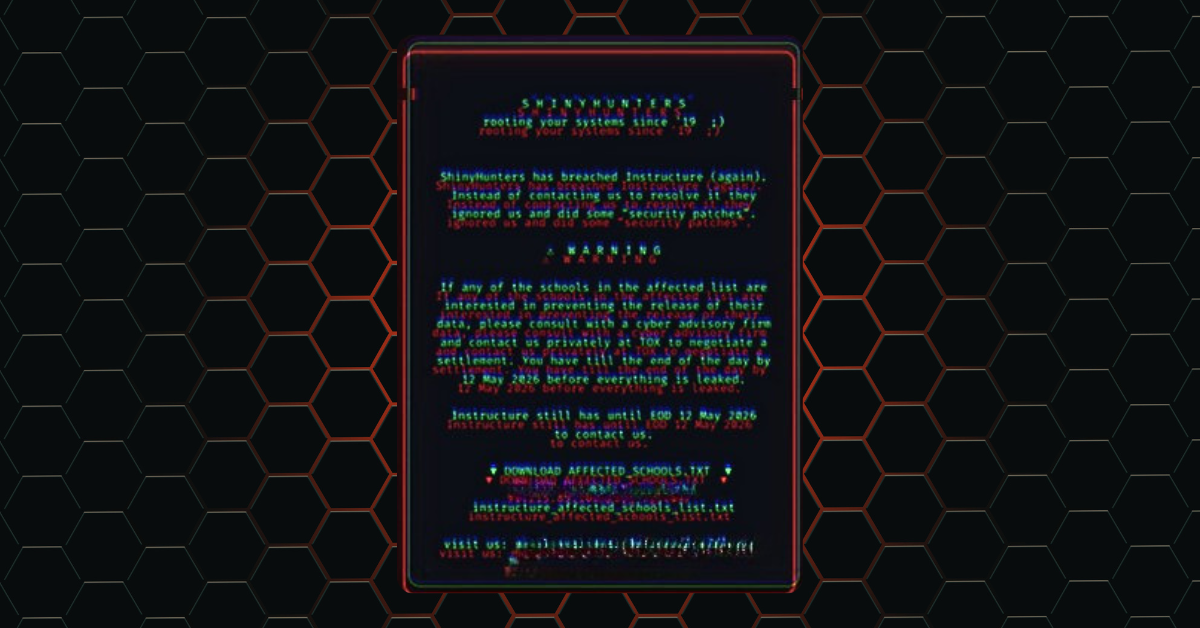

- Education platform Canvas reached settlement with ransomware group “ShinyHunters” to prevent the release of data affecting 275 million users

- New malware dubbed “NoVoice” infiltrates the Google Play Store and infects 2.3 million devices

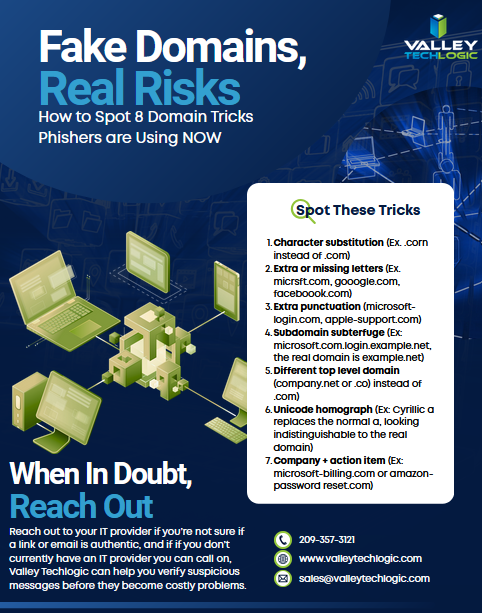

- .corn or .com? Domain scams are getting trickier, here’s how you spot them

This article was powered by Valley Techlogic, leading provider of trouble-free IT services for businesses in California including Merced, Fresno, Stockton & More. You can find more information at https://www.valleytechlogic.com/ or on Facebook at https://www.facebook.com/valleytechlogic/. Follow us on X at https://x.com/valleytechlogic and LinkedIn at https://www.linkedin.com/company/valley-techlogic-inc/.